A Study of Clustering Techniques and Hierarchical Matrix Formats for Kernel Ridge Regression

Journal Article

Proceedings - IEEE International Parallel and Distributed Processing Symposium (IPDPS)

- Univ. of Michigan, Ann Arbor, MI (United States). Dept. of Mathematics

- Lawrence Berkeley National Lab. (LBNL), Berkeley, CA (United States). Computational Research Division

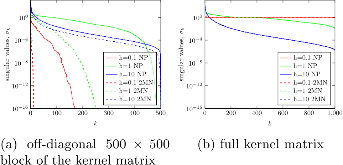

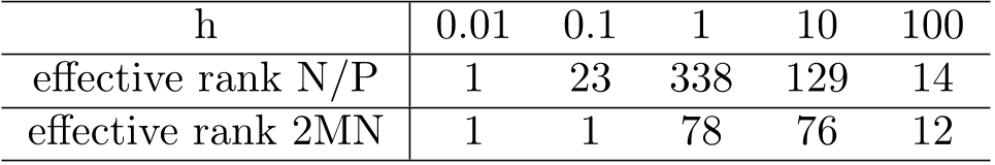

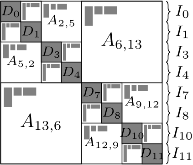

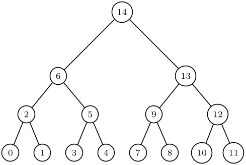

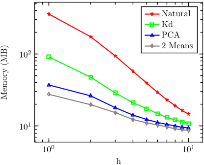

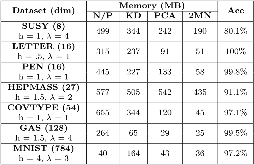

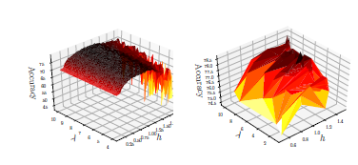

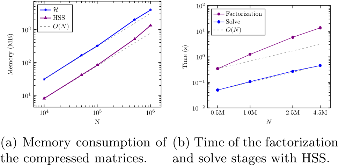

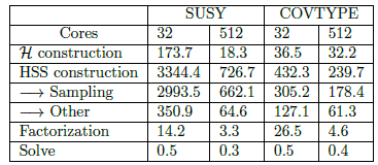

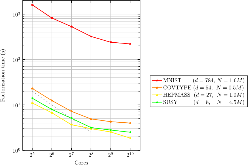

We present memory-efficient and scalable algorithms for kernel methods used in machine learning. Using hierarchical matrix approximations for the kernel matrix, the memory requirements, the number of floating-point operations, and the execution time are drastically reduced compared to standard dense linear algebra routines. We consider both the general H-matrix hierarchical format and Hierarchically Semi-Separable (HSS) matrices. Furthermore, we investigate the impact of several preprocessing and clustering techniques on the hierarchical matrix compression. Effective clustering of the input leads to a ten-fold increase in the efficiency of the compression. The algorithms are implemented using the STRUMPACK solver library. Our results confirm that, with correct tuning of the hyperparameters, classification using kernel ridge regression with the compressed matrix does not lose prediction accuracy compared to the exact (uncompressed) kernel matrix, and that our approach can be extended to O(1M) datasets, for which computation with the full kernel matrix becomes prohibitively expensive. We present numerical experiments in a distributed-memory environment on up to 1,024 processors of NERSC's Cori supercomputer, using datasets well known to the machine learning community that range in dimension from 8 to 784.
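The effect of input ordering on compression, and the use of a compressed kernel matrix inside kernel ridge regression, can be illustrated with a small dense NumPy sketch. This is not the STRUMPACK implementation and not the authors' method: it is a toy under stated assumptions, using 1-D points, a Gaussian kernel, sorting as a stand-in for clustering, and a single level of off-diagonal low-rank truncation instead of a full H or HSS hierarchy. All function names and parameter values (`h`, `lam`, `tol`, `n`) are hypothetical choices made for illustration.

```python
import numpy as np

def gaussian_kernel(x, y, h):
    """Gaussian kernel matrix for 1-D points: exp(-(x_i - y_j)^2 / (2 h^2))."""
    return np.exp(-(x[:, None] - y[None, :]) ** 2 / (2.0 * h ** 2))

def truncated_svd(B, tol):
    """Return factors U, s, Vt keeping singular values above tol * s[0]."""
    U, s, Vt = np.linalg.svd(B, full_matrices=False)
    r = int((s > tol * s[0]).sum())
    return U[:, :r], s[:r], Vt[:r]

def compress_one_level(K, tol):
    """Keep the two diagonal blocks dense and replace the off-diagonal
    blocks by a truncated-SVD approximation. A real hierarchical format
    would store the factors and recurse; the result is materialized
    densely here only to keep the sketch short."""
    m = K.shape[0] // 2
    U, s, Vt = truncated_svd(K[:m, m:], tol)
    Kc = K.copy()
    Kc[:m, m:] = (U * s) @ Vt
    Kc[m:, :m] = Kc[:m, m:].T  # K is symmetric
    return Kc, len(s)

rng = np.random.default_rng(0)
n, h, lam, tol = 400, 0.02, 0.1, 1e-6   # illustrative values (assumptions)
x = rng.uniform(0.0, 1.0, n)            # points arrive in random order
y = np.where(x < 0.5, 1.0, -1.0)        # two-class labels

# Clustering stand-in: sort so that each half of the index range
# covers one contiguous interval of the data.
order = np.argsort(x)
xs, ys = x[order], y[order]

# Off-diagonal rank without and with the reordering
_, rank_shuffled = compress_one_level(gaussian_kernel(x, x, h), tol)
K = gaussian_kernel(xs, xs, h)
Kc, rank_sorted = compress_one_level(K, tol)
rel_err = np.linalg.norm(K - Kc) / np.linalg.norm(K)

# Kernel ridge regression classifier trained with the compressed matrix:
# solve (Kc + lam I) w = y, then predict with sign(k(x_test, X) w).
w = np.linalg.solve(Kc + lam * np.eye(n), ys)
x_test = rng.uniform(0.0, 1.0, 100)
pred = np.sign(gaussian_kernel(x_test, xs, h) @ w)
accuracy = float((pred == np.where(x_test < 0.5, 1.0, -1.0)).mean())

print(rank_shuffled, rank_sorted, rel_err, accuracy)
```

In this toy setting the reordered matrix needs a much smaller off-diagonal rank than the randomly ordered one for the same tolerance, which is the mechanism behind the compression gain that clustering provides. The high-dimensional datasets and the specific clustering techniques studied in the paper are not reproduced here.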

- Research Organization:

- Lawrence Berkeley National Laboratory (LBNL), Berkeley, CA (United States)

- Sponsoring Organization:

- USDOE Office of Science (SC)

- Grant/Contract Number:

- AC02-05CH11231

- OSTI ID:

- 1563957

- Journal Information:

- Journal Name: Proceedings - IEEE International Parallel and Distributed Processing Symposium (IPDPS); Vol. 2018; ISSN 1530-2075

- Publisher:

- IEEE

- Country of Publication:

- United States

- Language:

- English

Preparing sparse solvers for exascale computing | journal | January 2020

Similar Records

Efficient scalable algorithms for hierarchically semiseparable matrices

STRUMPACK -- STRUctured Matrices PACKage

Approximate l-fold cross-validation with Least Squares SVM and Kernel Ridge Regression

Journal Article · Wed Sep 14 00:00:00 EDT 2011 · SIAM J. Scientific Computing · OSTI ID:1052181

STRUMPACK -- STRUctured Matrices PACKage

Software · Mon Dec 01 00:00:00 EST 2014 · OSTI ID:1328126

Approximate l-fold cross-validation with Least Squares SVM and Kernel Ridge Regression

Conference · Mon Dec 31 23:00:00 EST 2012 · OSTI ID:1111451